Giant AI data centers have become the backbone of modern artificial intelligence, but their growing size and energy demands are raising serious concerns. From mountains of electronic waste to staggering water consumption, especially in arid regions, the environmental impact is undeniable. Mining the rare earth elements these massive systems require often involves destructive practices and human rights violations. Energy use is even more striking: the human brain runs on about 20 watts, less than a typical desktop computer, yet AI data centers consume electricity on an epic scale.

Beyond environmental concerns, these colossal data centers have triggered a global supply-chain crunch. High-performance GPUs, essential for training and running advanced AI models, are in short supply, driving up costs and limiting accessibility. Even the most ambitious companies racing toward artificial general intelligence struggle to satisfy the insatiable computational demands of these centralized systems.


The Rise of Local AI Computing

A promising alternative to massive cloud-based AI is running models locally—right on your own computer. Local AI allows for greater control over data privacy and sovereignty, but it comes with limitations. Large AI models require immense computing power, and running them on a single machine can strain hardware capabilities.

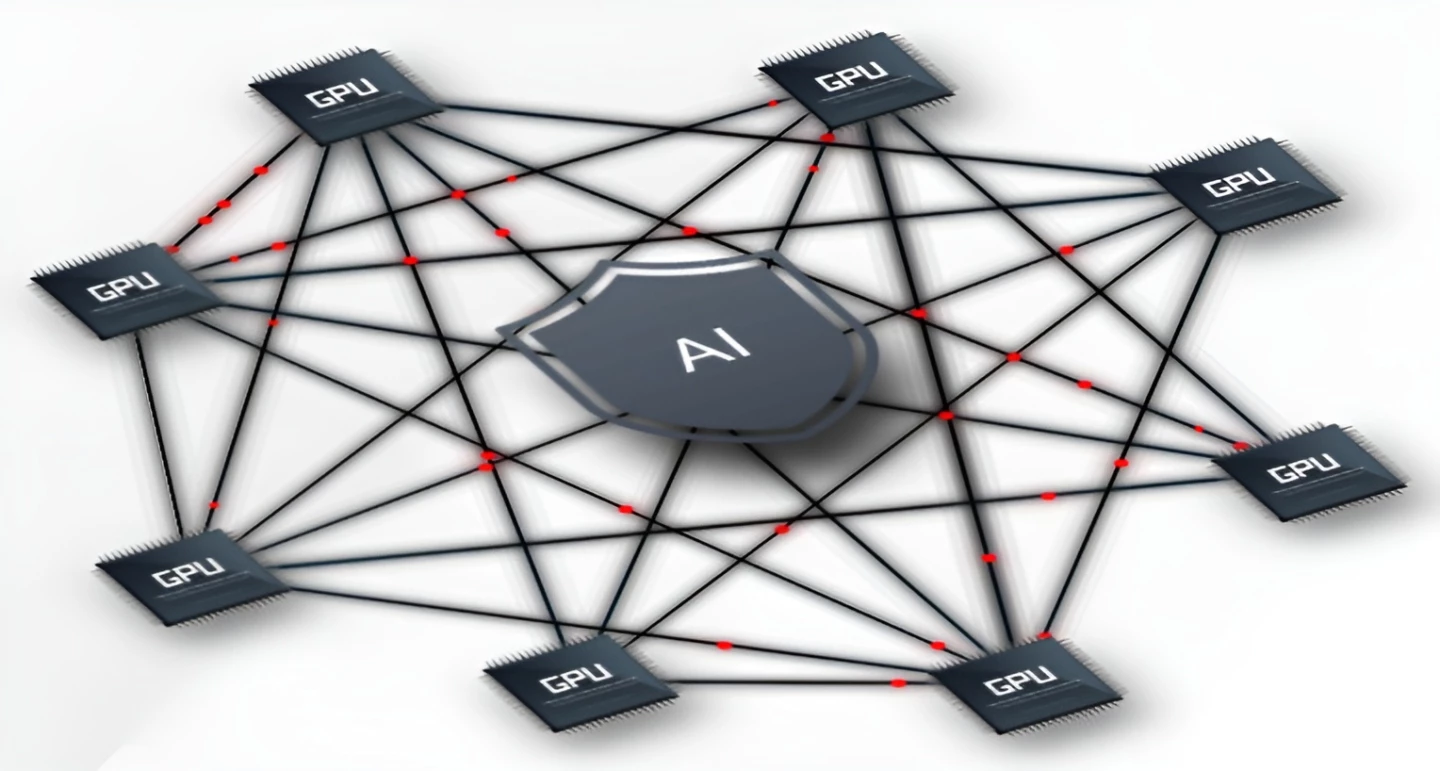

This challenge inspired researchers at Switzerland’s École Polytechnique Fédérale de Lausanne (EPFL) to explore distributed AI computing. By spreading AI workloads across a network of ordinary desktop PCs, they developed a system that bypasses the need for centralized “Big Cloud” infrastructure.

Introducing Anyway Systems

EPFL researchers Gauthier Voron, Geovani Rizk, and Rachid Guerraoui launched Anyway Systems, software that runs AI models on networks of consumer-grade computers. The system downloads open-weight AI models, such as OpenAI's gpt-oss-120b, and processes queries locally without giving up the models' capabilities.

“For years, people assumed large AI models required enormous resources and that privacy, sovereignty, and sustainability were compromised as a result,” explains Rachid Guerraoui, head of EPFL’s Distributed Computing Lab. “Our approach proves that smarter, more efficient methods are possible.”

By distributing processing across a small local network, Anyway Systems can run a model such as gpt-oss-120b on as few as four standard computers. The software self-stabilizes, automatically adapting to make the best use of the available local hardware. Inference may be somewhat slower than in a centralized data center, but accuracy is not compromised.
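To see why four machines can be enough, a rough estimate of weight memory helps. The sketch below assumes about 120 billion parameters stored at 4 bits each (a common quantization level for running large models on consumer hardware; the exact format Anyway Systems uses is not stated here) and an even split across machines, ignoring the KV cache, activations, and runtime overhead that add more in practice.

```python
# Back-of-the-envelope estimate of how model weights divide across PCs.
# Assumptions (illustrative, not from the source): ~120 billion parameters,
# 4-bit quantized weights, an even split, and no allowance for KV cache,
# activations, or runtime overhead.


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Return the memory needed to store the weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def per_node_gb(total_gb: float, num_nodes: int) -> float:
    """Return each machine's share of the weights under an even split."""
    if num_nodes < 1:
        raise ValueError("need at least one node")
    return total_gb / num_nodes


if __name__ == "__main__":
    total = weight_memory_gb(120e9, 4)  # 120e9 * 4 bits / 8 / 1e9 = 60.0 GB
    share = per_node_gb(total, 4)       # 60.0 GB / 4 machines = 15.0 GB each
    print(f"total weights: {total:.1f} GB, per machine: {share:.1f} GB")
```

Under these assumptions the weights come to about 60 GB, roughly 15 GB per machine. At 16 bits per parameter the same model would need about 240 GB in total, which is why quantization matters as much as the number of machines.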

gpt-oss-120b: High Performance, Minimal Hardware

gpt-oss-120b is a powerful reasoning model, ranking just behind OpenAI's o3 model on coding, mathematics, and health benchmarks. Despite its capabilities, it runs on relatively modest hardware. Users can browse the web, write Python code, and perform complex chain-of-thought tasks, all without relying on massive server arrays.

Setting up Anyway Systems is straightforward, taking roughly 30 minutes. By keeping processing local, users retain complete control over private data. Companies, NGOs, and governments can safeguard their information, avoiding potential ethical or legal pitfalls associated with centralized data storage.

Scaling AI Without Big Tech

While home users would need multiple high-spec PCs, small companies or organizations might already have sufficient hardware to implement the system. Guerraoui emphasizes, “We can run everything locally, download our open-source AI of choice, tailor it to our needs, and maintain control without depending on Big Tech.”

Some may compare this to Google AI Edge, which runs AI tasks on individual mobile devices. However, Guerraoui points out that Google's solution is limited to small models optimized for phones and cannot distribute large models across multiple devices in a fault-tolerant, scalable way. Anyway Systems, by contrast, can handle models with hundreds of billions of parameters using just a few GPUs.
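Distributing a large model across several machines is commonly done by giving each machine a contiguous slice of the model's layers (pipeline-style partitioning). The sketch below illustrates that general idea and the simplest form of fault tolerance: when a machine drops out, the layers are re-divided among the survivors. It is a hypothetical illustration, not Anyway Systems' actual algorithm, which the source does not describe; the machine names, memory sizes, and 36-layer model are made up.

```python
# Hypothetical sketch of pipeline-style partitioning: each machine gets a
# contiguous range of transformer layers, sized in proportion to its free
# memory. NOT Anyway Systems' algorithm; names and numbers are made up.
from typing import Dict, List, Tuple


def partition_layers(num_layers: int, free_mem_gb: Dict[str, float]) -> List[Tuple[str, range]]:
    """Assign layers 0..num_layers-1 to machines in proportion to free memory."""
    if not free_mem_gb:
        raise ValueError("no machines available")
    total_mem = sum(free_mem_gb.values())
    machines = list(free_mem_gb.items())
    plan: List[Tuple[str, range]] = []
    start = 0
    cumulative_mem = 0.0
    for i, (name, mem) in enumerate(machines):
        cumulative_mem += mem
        if i == len(machines) - 1:
            end = num_layers  # last machine takes the remainder, so no layer is lost
        else:
            # Rounding the cumulative share keeps ranges contiguous and non-overlapping.
            end = round(num_layers * cumulative_mem / total_mem)
        plan.append((name, range(start, end)))
        start = end
    return plan


def replan_after_failure(num_layers: int, free_mem_gb: Dict[str, float],
                         failed: str) -> List[Tuple[str, range]]:
    """Simplest fault tolerance: re-divide all layers among the surviving machines."""
    survivors = {name: mem for name, mem in free_mem_gb.items() if name != failed}
    return partition_layers(num_layers, survivors)


if __name__ == "__main__":
    cluster = {"pc-a": 24.0, "pc-b": 24.0, "pc-c": 16.0, "pc-d": 16.0}  # free GB per PC
    for name, layers in partition_layers(36, cluster):
        print(f"{name}: layers {layers.start}-{layers.stop - 1}")
    print("after pc-b fails:")
    for name, layers in replan_after_failure(36, cluster, "pc-b"):
        print(f"{name}: layers {layers.start}-{layers.stop - 1}")
```

With these made-up numbers, the two 24 GB machines each get 11 layers and the two 16 GB machines get 7; once pc-b drops out, the survivors take 15, 11, and 10 layers. A real system would also need to detect failures, move the affected weights, and resume in-flight requests; the sketch covers only the planning step.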

Overcoming Single-Machine Limitations

Many existing local AI solutions, such as msty.ai or Llama, run on a single machine, which creates a single point of failure. Powerful models typically require extremely expensive GPUs such as Nvidia's 80 GB H100, which alone can cost upwards of $90,000 amid supply shortages, despite a retail price of about $40,000. Achieving comparable computing power with consumer PCs has traditionally required a dedicated team to set up and maintain the system. Anyway Systems automates this process, making it robust, transparent, and easy to maintain.

Benefits of Distributed Local AI

While distributed systems like Anyway cannot entirely replace data centers, they offer significant advantages:

- Energy Efficiency: By using existing hardware, distributed AI reduces the need for energy-intensive server farms.

- Data Privacy and Sovereignty: Local processing keeps sensitive information within an organization, reducing reliance on third-party cloud providers.

- Cost-Effectiveness: Instead of investing in high-end GPUs or data center infrastructure, organizations can leverage existing resources.

- Scalability: Networks of standard PCs can collectively run powerful AI models, scaling capabilities without centralized bottlenecks.

These benefits make distributed AI an appealing choice for companies, research labs, and even governments seeking to maintain control over their data while embracing advanced AI technology.

A Future Beyond Big Data Centers

The emergence of systems like Anyway signals a shift in AI infrastructure. While massive data warehouses will continue to play a role, particularly for training new models, distributed networks offer a viable way to democratize access to high-powered reasoning models. By spreading processing across smaller, locally managed networks, organizations can harness AI while avoiding much of the environmental, ethical, and financial cost of traditional data centers.

Guerraoui concludes, “Distributed, frugal, and intelligent computing strategies show that we can have large, capable AI models without sacrificing sustainability or privacy. This is a new chapter in AI deployment—one where organizations control their own AI destiny.”

Frequently Asked Questions:

What does it mean for AI reasoning models to run without massive data centers?

It means that advanced AI models can now operate on smaller, local networks of standard computers instead of relying on large, energy-intensive cloud data centers. This approach distributes processing tasks, making AI more accessible, efficient, and environmentally friendly.

How is running AI locally different from using cloud-based data centers?

Local AI runs directly on consumer-grade PCs or small organizational networks, keeping data private and reducing dependency on expensive, power-hungry cloud servers. Cloud-based AI, in contrast, requires massive infrastructure, high-powered GPUs, and consumes significantly more electricity.

Are local AI models as powerful as those running in data centers?

For inference, largely yes. With distributed computing, even complex models like gpt-oss-120b can handle reasoning, coding, and other advanced tasks without any loss of accuracy. The main trade-off is that responses may be slower, since consumer hardware is less powerful than data-center servers.

Who can benefit from this technology?

Small and medium-sized companies, NGOs, research labs, and even individuals with multiple PCs can leverage local distributed AI. It’s particularly useful for organizations prioritizing data sovereignty and sustainability.

What hardware is required for distributed AI?

While a single PC might struggle with large AI models, a network of consumer-grade PCs (sometimes as few as four for models like gpt-oss-120b) can handle demanding tasks efficiently. Expensive data-center GPUs such as the H100 may not be necessary for inference.

Does this technology replace data centers completely?

Not entirely. Training new AI models still requires significant computational power typically provided by data centers. However, local distributed AI is ideal for inference, testing, and daily operational use without massive infrastructure.

How does distributed AI impact sustainability?

By reusing existing hardware instead of relying on energy-intensive warehouses, distributed AI can reduce electricity consumption, lower carbon footprints, and cut the electronic waste associated with large-scale servers.

Conclusion

The era of massive, energy-hungry AI data centers is evolving. Advanced AI reasoning models can now run efficiently on local networks of standard computers, offering a sustainable, cost-effective, and privacy-conscious alternative. Distributed AI lets organizations and individuals use models like gpt-oss-120b without sacrificing accuracy, data sovereignty, or environmental responsibility. As the technology matures, this approach marks a significant step toward a more accessible and ethical AI ecosystem, putting high-powered intelligence directly into the hands of those who need it without reliance on colossal infrastructure.